At a conference last week, NVIDIA and Emerald AI introduced a new approach to powering next-generation AI infrastructure, redefining AI factories as flexible, intelligent grid assets rather than static power loads. The collaboration integrates accelerated computing with real-time energy orchestration, enabling large-scale AI deployments to connect to power grids faster, operate more efficiently, and enhance overall system reliability.

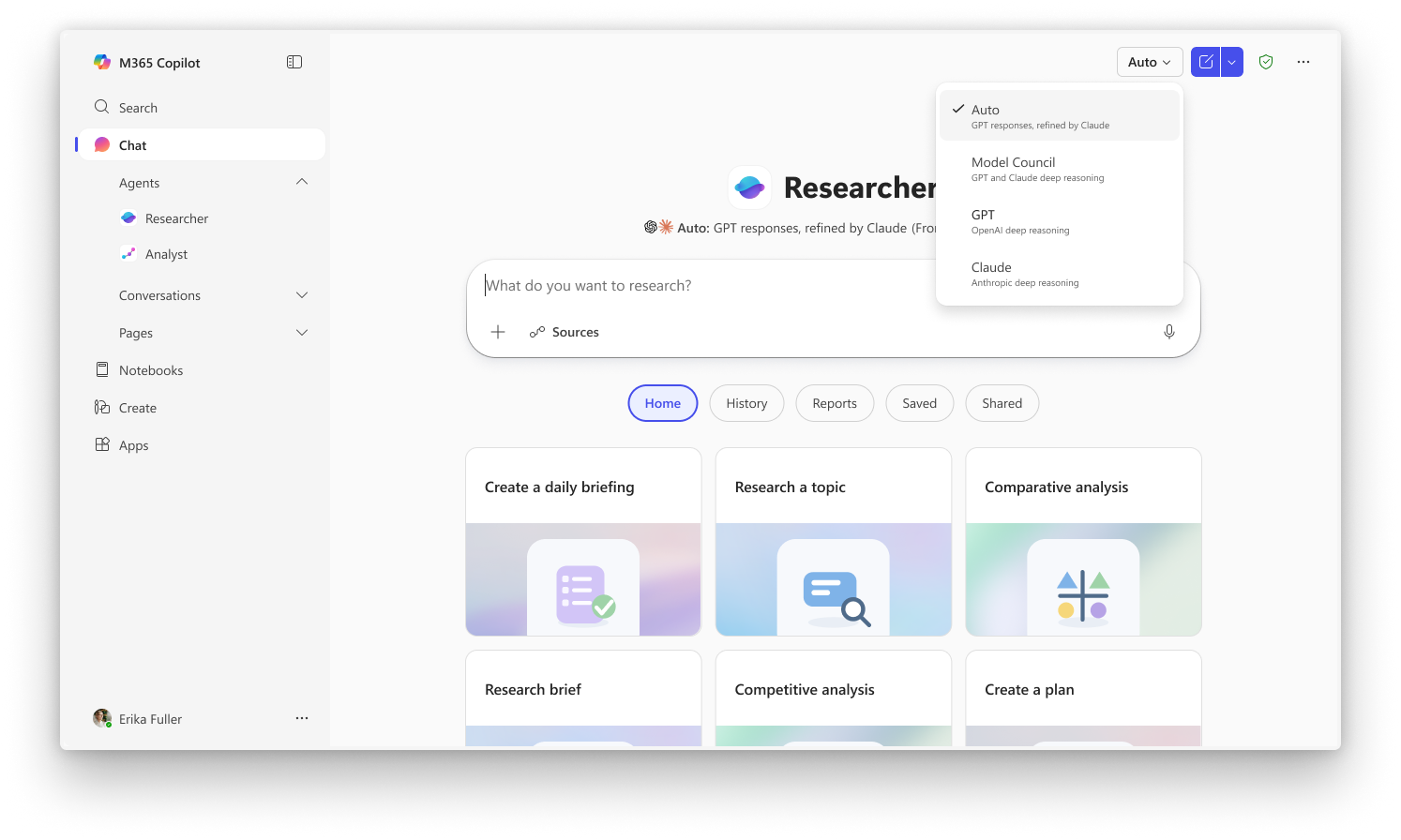

The joint solution is built on NVIDIA’s Vera Rubin DSX AI Factory reference architecture and Emerald AI’s Conductor platform. By combining compute, power networking, and control into a unified system, the model allows AI factories to dynamically adjust their energy consumption in response to grid conditions. This flexibility reduces the need for overbuilding infrastructure to meet peak demand, while maintaining high-performance output in the form of AI-generated tokens.
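As a rough illustration of what adjusting consumption in response to grid conditions could look like, the sketch below scales a site's power cap between its nominal draw and a floor. It is not Emerald AI's Conductor API. The function name, the `grid_stress` signal, and the figures are hypothetical assumptions chosen only to show the idea.

```python
def power_cap_kw(nominal_kw: float, min_kw: float, grid_stress: float) -> float:
    """Return a site power cap in kW for a given grid stress level.

    Hypothetical model: grid_stress is a signal in [0, 1], where 0 means no
    curtailment is requested and 1 means the site should drop to its floor.
    The cap is interpolated linearly between nominal_kw and min_kw.
    """
    if min_kw > nominal_kw:
        raise ValueError("min_kw must not exceed nominal_kw")
    # Clamp out-of-range signals so the cap always stays within [min_kw, nominal_kw].
    stress = min(max(grid_stress, 0.0), 1.0)
    return nominal_kw - stress * (nominal_kw - min_kw)


# Illustrative figures only: a 100 MW site that can flex down to 75 MW.
cap_at_half_stress = power_cap_kw(100_000.0, 75_000.0, 0.5)
```

A real orchestrator would also have to decide which workloads to slow, pause, or move in order to stay under the cap; this sketch covers only the cap itself.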

A group of major energy companies, including AES Corporation, Constellation Energy, Invenergy, NextEra Energy, Nscale Energy & Power, and Vistra Corp, are collaborating to develop the generation capacity required to support rapidly increasing AI-driven electricity demand. Their efforts include hybrid energy projects with co-located power sources designed to accelerate deployment timelines while supporting broader grid stability.

NVIDIA founder and CEO Jensen Huang described the emerging infrastructure model as a “five-layer AI cake,” where energy forms the foundational layer. This paradigm emphasises close coordination across energy systems, chips, infrastructure, models, and applications to drive efficiency gains.

Power efficiency is becoming a defining metric for modern AI data centres, with “tokens per second per watt” emerging as a key benchmark. According to Huang, NVIDIA is focusing on “extreme codesign” to significantly improve this metric year over year. The company reports that, from its Kepler GPU launched in 2012 to its latest Vera Rubin platform, the number of tokens generated within the same power budget has increased more than a million-fold.
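To make the benchmark concrete, here is a minimal sketch of how “tokens per second per watt” can be computed from a measured run. Because a watt is a joule per second, the figure is equivalent to tokens per joule. The function name and the sample numbers are illustrative assumptions, not NVIDIA measurements.

```python
import math


def tokens_per_second_per_watt(tokens: float, seconds: float, avg_power_watts: float) -> float:
    """Tokens generated per second, per watt of average power draw.

    Equivalent to tokens per joule, since seconds * watts = joules.
    """
    if seconds <= 0 or avg_power_watts <= 0:
        raise ValueError("seconds and avg_power_watts must be positive")
    return tokens / (seconds * avg_power_watts)


# Hypothetical run: 1.2 million tokens in 60 seconds at an average draw of 10 kW.
efficiency = tokens_per_second_per_watt(1_200_000, 60, 10_000)

# A million-fold gain at a fixed power budget corresponds to just under
# 20 successive doublings of this metric.
doublings = math.log2(1_000_000)
```

Comparing systems on this basis only makes sense when the model, precision, and latency targets are held constant, since each of those changes how many tokens a given watt can produce.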

The initiative signals a broader industry shift toward integrating energy intelligence directly into computing infrastructure, an approach that could play a critical role in scaling AI sustainably while maintaining grid resilience.