Meta has announced plans to develop and deploy four new generations of its Meta Training and Inference Accelerator (MTIA) chips over the next two years, significantly speeding up its custom silicon roadmap to support growing artificial intelligence workloads across its platforms.

The chips will be used to power key AI functions, including ranking, recommendations and generative AI (GenAI) systems that underpin the company’s apps and advertising infrastructure.

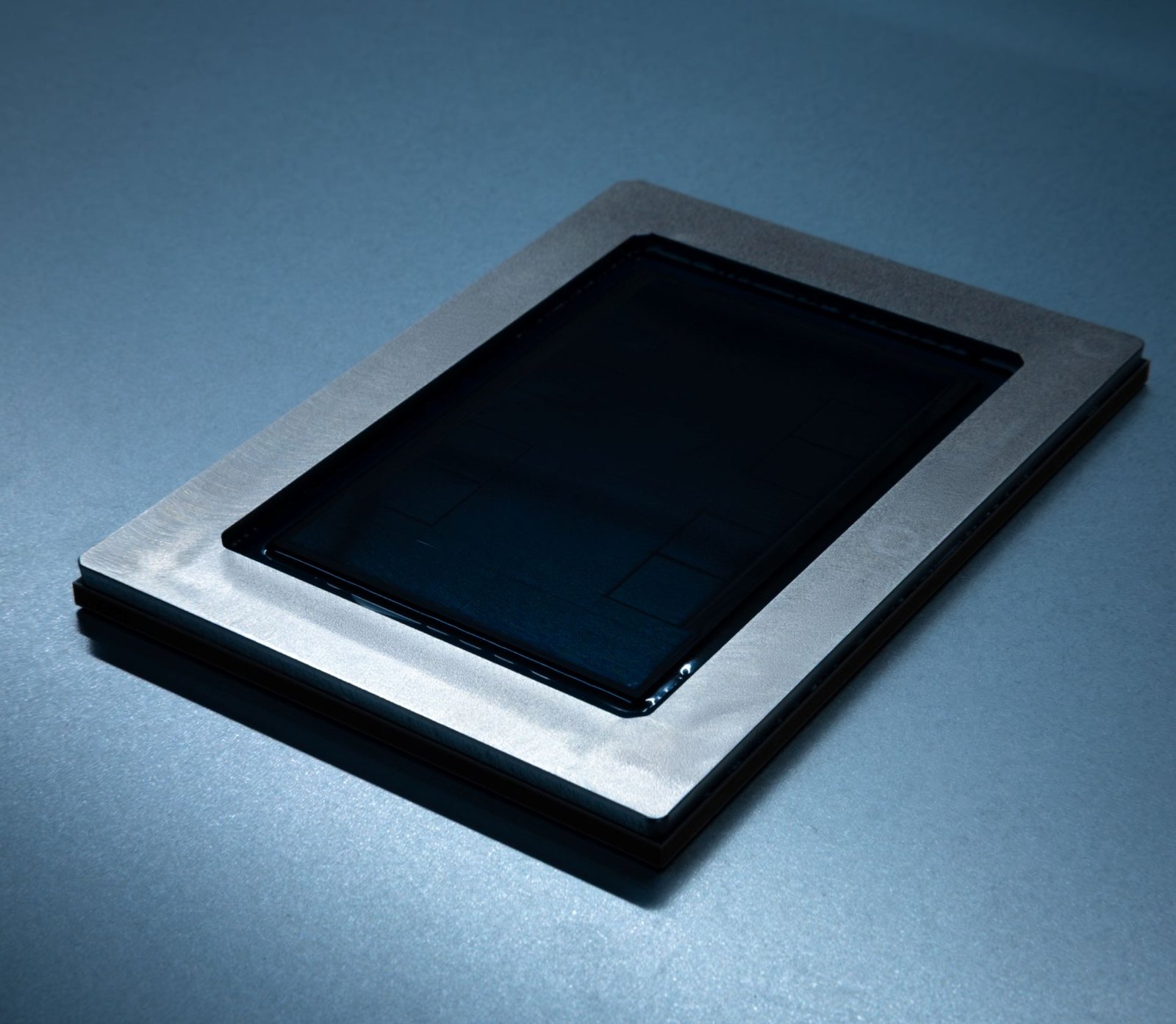

Meta first introduced the MTIA family of custom-designed chips in 2023 to improve the efficiency of training and inference tasks in its AI systems. Since then, the company has deployed hundreds of thousands of MTIA chips across its data centres, primarily for inference workloads powering organic content and advertising recommendations.

According to Meta, the chips are designed specifically for its internal workloads and are part of a broader full-stack approach that integrates hardware, software and infrastructure. The company says this custom design delivers higher compute efficiency and lower operating costs than general-purpose processors.

As AI workloads continue to expand, Meta said it is adopting a portfolio approach to scaling infrastructure capacity, combining chips from industry partners such as Nvidia with its own MTIA custom silicon, which remains central to the company’s AI infrastructure strategy.

Under the new roadmap, MTIA 300 is already in production and is being used for training systems that power ranking and recommendation algorithms. The next three generations — MTIA 400, MTIA 450 and MTIA 500 — are being designed to support a wider range of AI workloads.

While the future chips will be capable of handling multiple AI tasks, Meta said they will primarily be used for generative AI inference workloads, helping power large-scale AI features across its platforms through 2027.

Four generations in about two years works out to roughly one new chip every six months. That is a faster cadence than traditional chip design timelines, and it reflects the growing demand for specialised AI hardware as companies race to scale generative AI capabilities.