Researchers from the Joint Research Centre, in collaboration with Engineering Ingegneria Informatica and the Institute of Health and Society, have developed an AI-powered system that transforms fragmented disaster-related news reports into structured, actionable knowledge for scientists, policymakers and emergency responders.

Detailed in a new study published in the Nature Portfolio journal Scientific Data, the system uses large language models to analyse millions of news articles and convert them into structured disaster “storylines” and interactive knowledge graphs. It offers a new tool for disaster risk modelling, impact analysis and emergency decision-making.

The system addresses a longstanding challenge in disaster management: while climate-related disasters such as hurricanes, floods and wildfires generate vast amounts of media coverage, much of that information remains scattered and unstructured, limiting its usefulness for rapid response and long-term planning.

The newly compiled dataset spans more than 3,000 disaster events across 175 countries between 2014 and 2024, covering 26 different disaster types. According to the researchers, it captures around 80 per cent of the global economic losses recorded over the same period in EM-DAT, one of the world’s most comprehensive disaster databases, maintained by the Centre for Research on the Epidemiology of Disasters (CRED) at UCLouvain.

Unlike traditional disaster databases, which primarily catalogue individual events and their immediate impacts, the AI-driven approach is designed to reveal cascading effects and complex interdependencies.

For example, heavy rainfall may trigger flooding, which can then disrupt transportation systems, damage agricultural output and increase the risk of disease outbreaks. By mapping these cause-and-effect relationships visually, the system enables emergency planners to better anticipate secondary consequences and allocate resources more effectively.

The platform operates in two stages. First, a Retrieval-Augmented Generation (RAG) pipeline searches millions of articles collected by the Europe Media Monitor to retrieve disaster-related coverage, and large language models running on GPT@JRC distil the retrieved text into structured summaries outlining what happened, who was affected, what triggered the event and how authorities responded.
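The study's exact output format is not described here. As a hypothetical illustration only, a storyline carrying the four elements listed above could be represented and validated in Python as follows; the field names and the assumption that the language model returns JSON are illustrative choices, not the JRC schema.

```python
import json
from dataclasses import dataclass


@dataclass
class Storyline:
    """Hypothetical record for one disaster storyline (field names are assumptions)."""
    event: str
    what_happened: str
    affected: list[str]
    trigger: str
    response: str


# Fields every storyline must contain in this sketch.
REQUIRED_FIELDS = ("event", "what_happened", "affected", "trigger", "response")


def parse_storyline(llm_output: str) -> Storyline:
    """Parse a JSON summary (assumed model output format) into a Storyline.

    Raises ValueError if a required field is missing or 'affected' is not a list,
    so incomplete summaries are rejected instead of silently stored.
    """
    data = json.loads(llm_output)
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"storyline is missing fields: {missing}")
    if not isinstance(data["affected"], list):
        raise ValueError("'affected' must be a list")
    return Storyline(**{name: data[name] for name in REQUIRED_FIELDS})
```

Validating each summary against a fixed set of fields is one simple way to keep machine-generated records comparable across thousands of events before they are turned into graphs.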

In the second stage, these summaries are converted into visual knowledge graphs, offering contextual and causal insights beyond the statistical records available through traditional databases.
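The article does not specify how the graphs are represented. As a hypothetical sketch, assuming each storyline can be reduced to (cause, relation, effect) triples, the rainfall-to-flooding cascade described earlier could be stored as a directed graph and queried for secondary consequences with a breadth-first search. The triples and function names below are illustrative, not taken from the study.

```python
from collections import defaultdict, deque

# Hypothetical (cause, relation, effect) triples for one storyline,
# mirroring the heavy rainfall -> flooding cascade described in the article.
TRIPLES = [
    ("heavy rainfall", "triggers", "flooding"),
    ("flooding", "disrupts", "transportation"),
    ("flooding", "damages", "agricultural output"),
    ("flooding", "increases risk of", "disease outbreaks"),
]


def build_graph(triples):
    """Build a directed adjacency list mapping each cause to its (relation, effect) pairs."""
    graph = defaultdict(list)
    for cause, relation, effect in triples:
        graph[cause].append((relation, effect))
    return graph


def downstream_effects(graph, start):
    """Breadth-first search returning every effect reachable from `start`, in visit order."""
    seen = {start}
    order = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        # .get avoids inserting empty entries into the defaultdict for leaf nodes.
        for _relation, effect in graph.get(node, []):
            if effect not in seen:
                seen.add(effect)
                order.append(effect)
                queue.append(effect)
    return order


graph = build_graph(TRIPLES)
print(downstream_effects(graph, "heavy rainfall"))
# ['flooding', 'transportation', 'agricultural output', 'disease outbreaks']
```

Plain dictionaries keep the sketch dependency-free; a system working at the scale of thousands of events would more plausibly rely on a dedicated graph library or graph database.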

The researchers say the approach also helps address reporting imbalances: disaster coverage has historically focused on sudden-onset events in high-income countries, while slower-moving crises such as droughts in vulnerable regions often receive less attention.

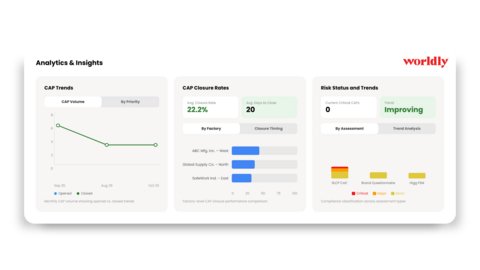

All compiled data, source code and processing workflows have been made openly available, alongside an interactive dashboard that allows users to explore disaster storylines and knowledge graphs directly.

The researchers believe the tool could significantly strengthen global disaster preparedness and resilience by enabling more data-driven decision-making in an era of escalating climate and geological risks.