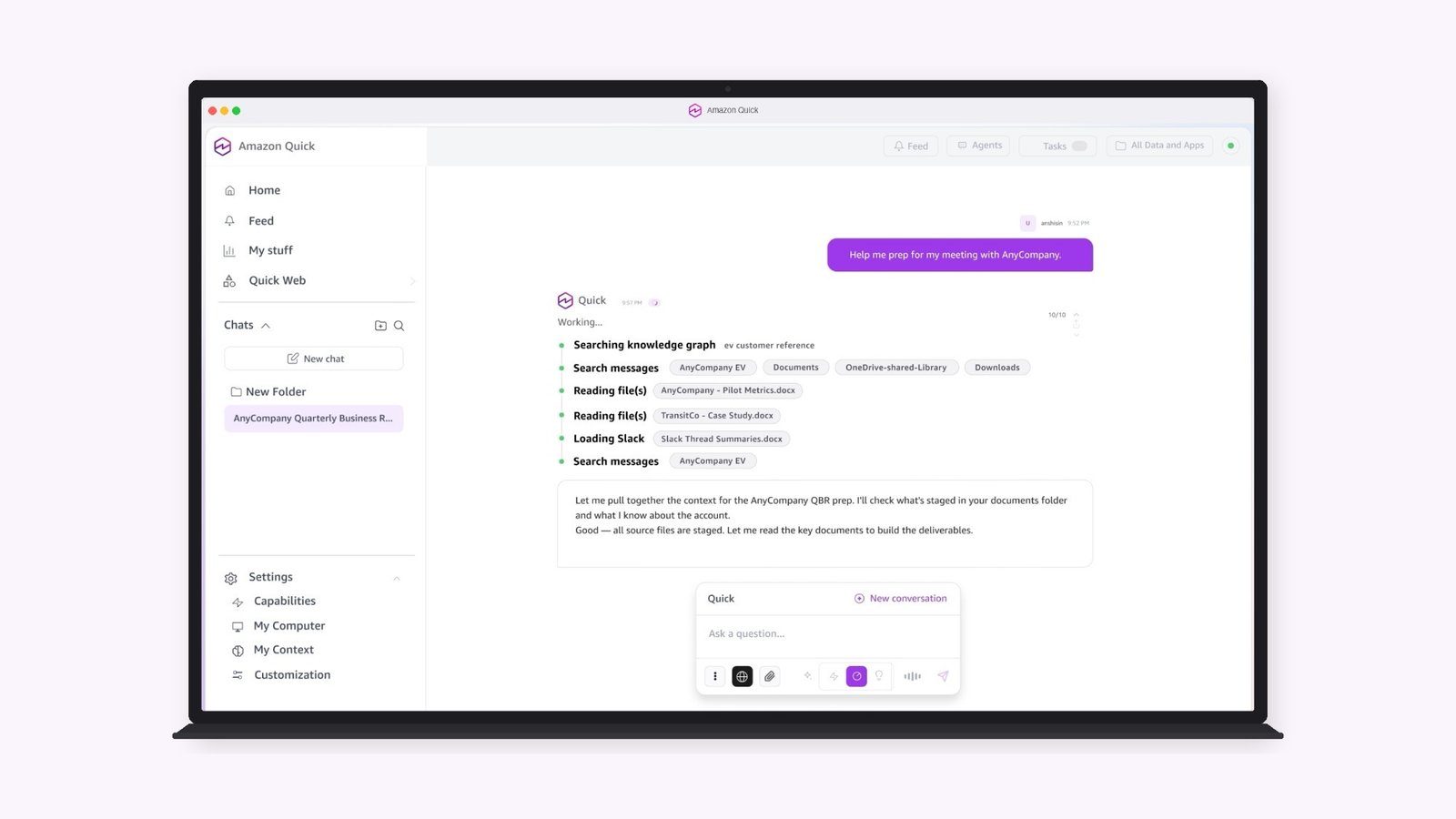

The session "AI For Societal Value: Responsible Innovation Across Healthcare And High Impact Sectors" explored how artificial intelligence is moving from research and experimentation towards real-world deployment that can deliver measurable societal outcomes. Bringing together clinicians, researchers, and academic leaders, the discussion focused on balancing innovation with responsibility as AI systems scale across healthcare and other high-impact domains.

Speakers highlighted that sustainable AI adoption requires more than technological capability. It demands ethical governance, regulatory preparedness, and implementation models that prioritise transparency, fairness, and long-term public benefit. The conversation examined how responsible innovation frameworks can support both commercial viability and societal value.

Key Themes Discussed

1. From Research To Real World Deployment

Panellists discussed how AI solutions are increasingly moving from experimental pilots into operational environments. The session emphasised the importance of validating real-world impact, ensuring clinical relevance, and building scalable models that deliver consistent outcomes.

2. Responsible Innovation And Ethical Governance

A key area of discussion centred on ethics, accountability, and trust. Speakers stressed that AI systems must be designed with clear governance frameworks to ensure fairness, transparency, and responsible decision-making, particularly in healthcare contexts where outcomes directly affect human wellbeing.

3. Building Long Term Societal Value

The panel explored how AI can generate lasting societal benefits when supported by sustainable commercial models, interdisciplinary collaboration, and policy frameworks that encourage innovation while safeguarding the public interest.

Speaker Perspectives

Dr. Arif Ahmed Sekh, UiT The Arctic University of Norway

Dr Sekh highlighted research-driven innovation pathways, emphasising the importance of translating academic advances into applied solutions that can be responsibly adopted across healthcare systems.

Dr. Gunjan Gupta Govil, Gunjan IVF World

Dr Govil shared clinical perspectives on AI integration, noting that patient-centred deployment and ethical clinical validation are essential for building trust in AI-driven healthcare tools.

Dr. Kiran D. Sekhar, Kiran Fertility Clinics

Dr Sekhar discussed how AI can support precision in clinical workflows, while emphasising that human expertise remains critical to ensuring responsible decision-making and patient safety.

Dr. Swarupa Mitra, Fortis Medical

Dr Mitra highlighted the role of governance frameworks and clinical accountability, stressing that AI adoption must align with established medical standards and patient outcome priorities.

Dr. Tanuj Bhatia, SGRR Medical College

Dr Bhatia focused on implementation challenges in academic and clinical environments, emphasising capacity building and interdisciplinary collaboration as important enablers for successful deployment.

Prof. Dilip K. Prasad, UiT The Arctic University of Norway

Prof Prasad emphasised transparency and accountability in AI design, highlighting how responsible innovation principles can guide long-term adoption and societal trust.

Strategic Takeaways

- Responsible innovation is essential for translating AI research into meaningful societal impact.
- Ethical governance and transparency must be embedded throughout the AI lifecycle.
- Collaboration between academia, clinicians, and industry can accelerate safe and scalable adoption.
- Sustainable deployment models are key to ensuring long-term value across healthcare and high-impact sectors.

Closing Note

The session reinforced that AI’s long-term success will depend on balancing innovation with accountability. By combining research insights, clinical experience, and governance frameworks, the discussion outlined pathways for scaling AI solutions that deliver societal benefit while maintaining trust, fairness, and responsible oversight.