OpenAI has announced that it has reached an agreement with the U.S. Department of War (DoW) to deploy advanced AI systems in classified environments, under what it describes as the most comprehensive safety framework yet applied to such collaborations.

The company said the deal establishes three non-negotiable red lines: no use of its technology for mass domestic surveillance, no use to direct autonomous weapons systems, and no use for high-stakes automated decision-making such as social credit–style systems. OpenAI stated that these principles are embedded not only in usage policies but also in deployment architecture and contractual terms.

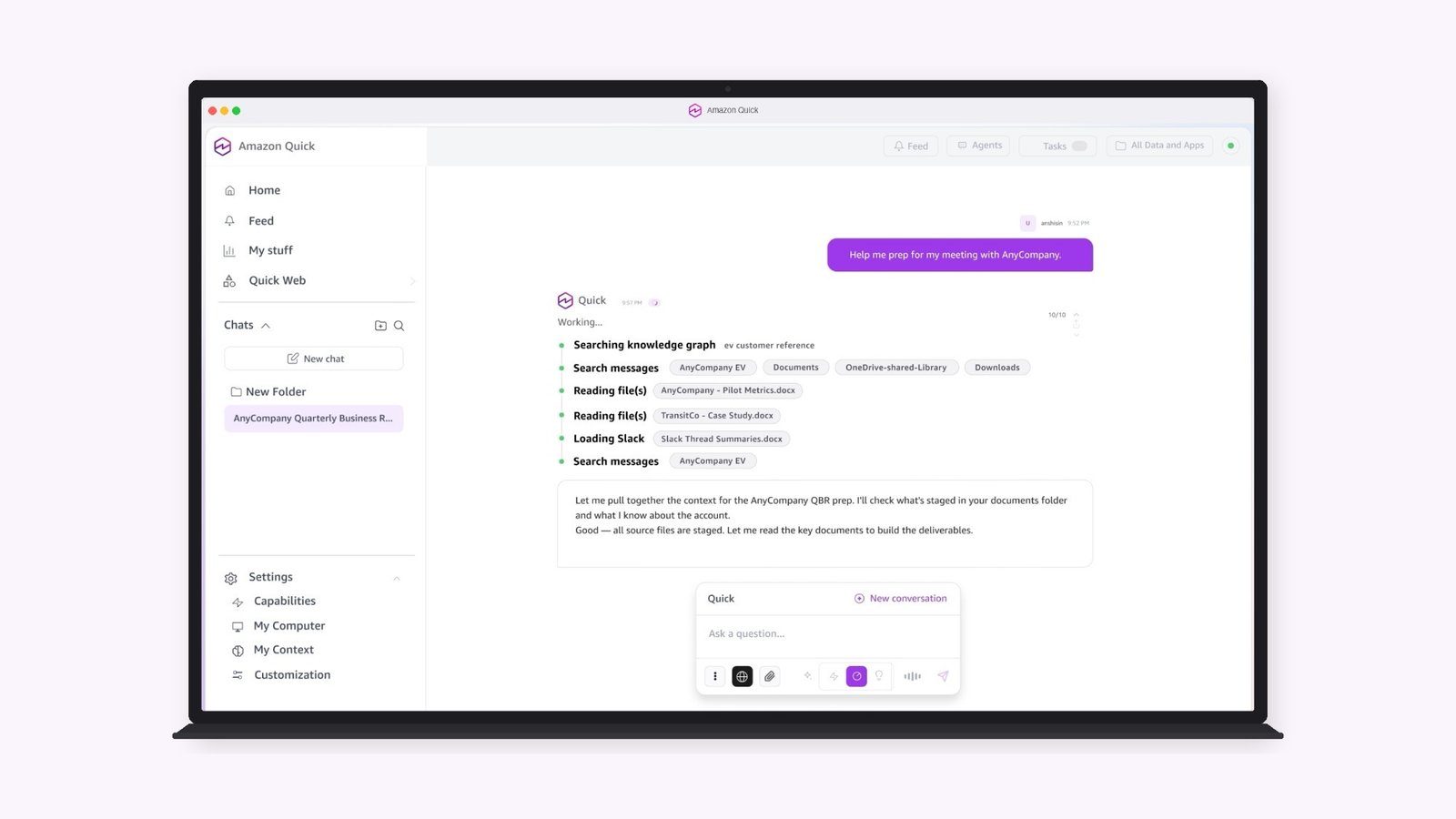

Under the agreement, deployment will be cloud-only, ensuring that models are not installed on edge devices that could enable autonomous lethal weapons. OpenAI will retain full control of its safety stack, including classifiers and monitoring systems, and will not provide “guardrails-off” or non-safety-trained models. Cleared OpenAI engineers and safety researchers will also be directly involved in supporting the deployment.

The contract specifies that the AI systems may only be used for lawful purposes consistent with U.S. law, Department policies, and established oversight protocols. It explicitly prohibits independent direction of autonomous weapons where human control is required and bars unconstrained monitoring of U.S. persons’ private information. Intelligence-related uses must comply with existing law, including the Fourth Amendment, the National Security Act, the Foreign Intelligence Surveillance Act, and relevant Defense Department directives.

OpenAI said it sought to balance national security needs with democratic accountability, emphasising that advanced AI tools should support those defending the United States while remaining subject to enforceable safeguards. The company added that it has asked the government to make similar terms available to other AI labs, arguing that broad collaboration with strong guardrails is essential for the responsible integration of frontier AI into national security contexts.